Obra

What is Obra?

Obra is a Chrome extension that collects your browsing history in real time, groups pages into thematic sessions, and makes everything searchable and conversational through an AI-powered dashboard.

Today, the backend stack runs locally on the user's machine (extension, API, and database), but LLM inference still uses cloud-hosted providers through external API calls. A core milestone is to move toward a local-first setup as much as possible to better preserve sensitive browsing data.

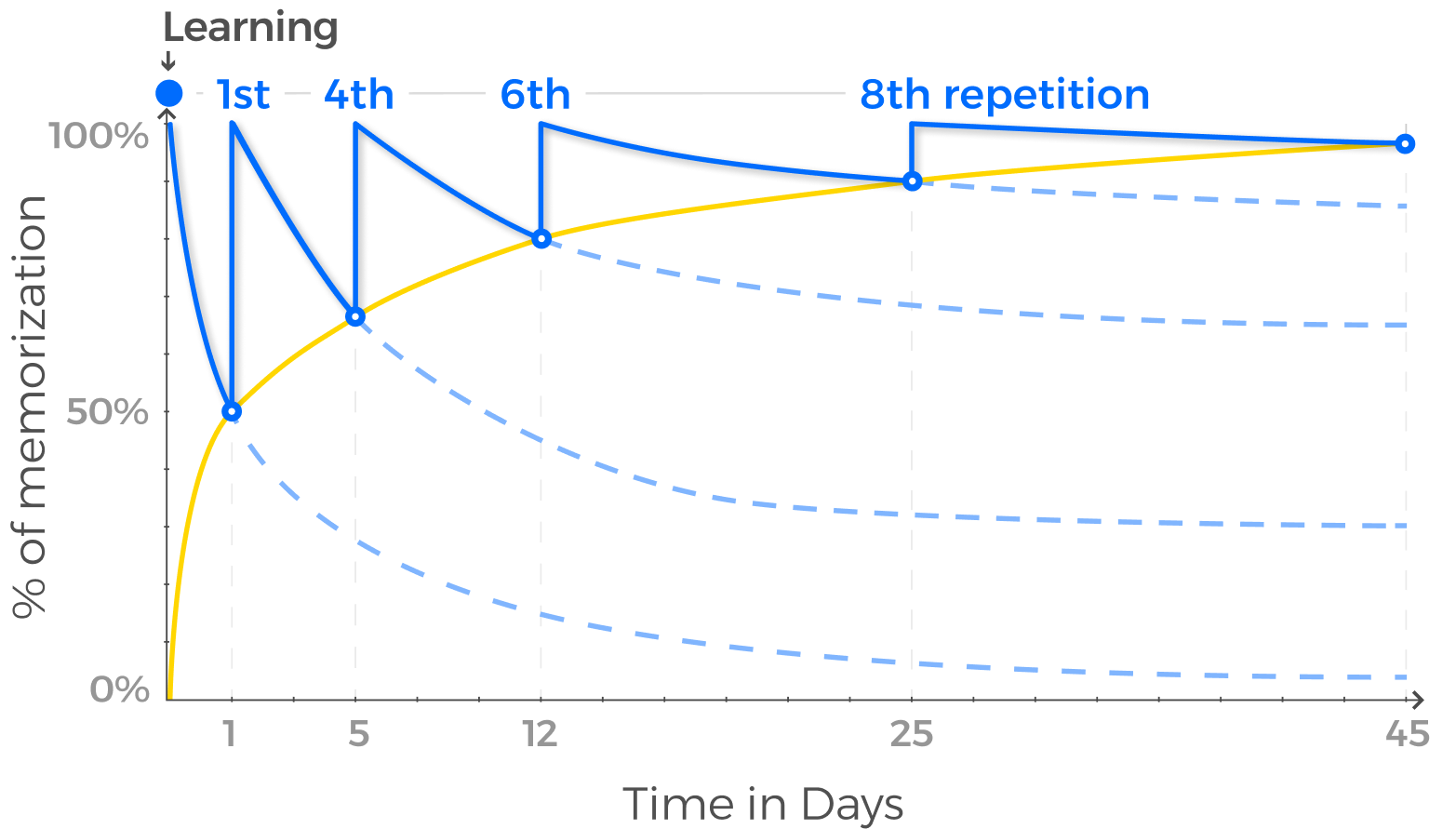

The end goal is a spaced repetition system driven by browsing behavior: detect what topics you've been exploring, track when you last revisited them, and prompt review at the right time based on the Ebbinghaus forgetting curve. Topic tracking and quizzes are the next milestone.

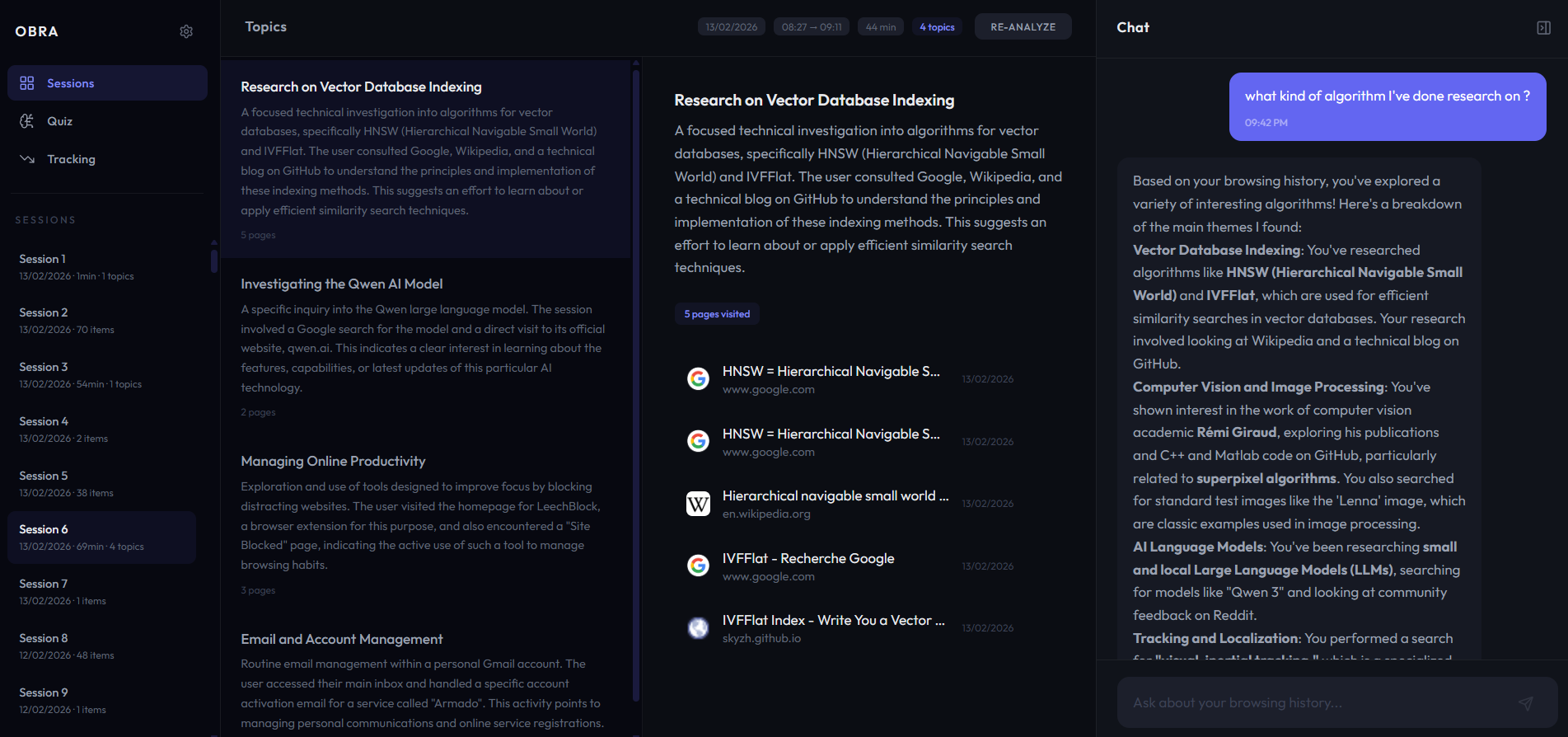

This screenshot shows the history-processing pipeline extracting meaningful semantic information from sparse, noisy browsing history. That pipeline is the prerequisite for the upcoming features that will set this application apart.

Live demo

Current features

History collection and session detection

A service worker listens to chrome.history.onVisited and collects browsing activity continuously. Pages are preprocessed (URL features, date formatting, noise filtering). Activity is split into sessions on an inactivity gap (30 min) or when a session reaches its maximum duration (90 min). Sessions are derived on demand from the stored items (event sourcing). Closed sessions are sent to the backend automatically.
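The splitting rule can be illustrated with a minimal sketch. It is written in Python for readability, although the extension itself is JavaScript. The `Visit` type, the `split_sessions` name, and the exact boundary handling (strictly greater than the limit, duration measured from the first visit of the session) are assumptions, not the project's actual code.

```python
from dataclasses import dataclass
from typing import List

INACTIVITY_GAP_S = 30 * 60  # a 30-minute pause closes the current session
MAX_DURATION_S = 90 * 60    # a session spans at most 90 minutes


@dataclass
class Visit:  # hypothetical shape of a stored history item
    url: str
    ts: float  # visit time in seconds since the epoch


def split_sessions(visits: List[Visit]) -> List[List[Visit]]:
    """Derive sessions from stored visits; no session state is persisted."""
    sessions: List[List[Visit]] = []
    for visit in sorted(visits, key=lambda v: v.ts):
        current = sessions[-1] if sessions else None
        if (
            current is None
            or visit.ts - current[-1].ts > INACTIVITY_GAP_S  # inactivity gap
            or visit.ts - current[0].ts > MAX_DURATION_S      # max duration
        ):
            sessions.append([visit])  # start a new session
        else:
            current.append(visit)
    return sessions
```

Because sessions are recomputed from the visits each time, changing either threshold only requires re-running the function over the stored items.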

Thematic clustering

The backend sends sessions to a cloud LLM API that identifies thematic clusters. Pages are assigned to clusters via embedding cosine similarity. Each cluster gets a name, a description, and links to the original pages. Results are cached in PostgreSQL so re-opening a session is instant.
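As an illustration of the assignment step, here is a minimal sketch that maps each page to the cluster with the most similar embedding. The function names and the dictionary-based inputs are hypothetical, and the toy vectors are 2-dimensional for readability; how the backend actually computes and stores embeddings is not shown here.

```python
import math
from typing import Dict, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def assign_pages(
    page_vecs: Dict[str, Sequence[float]],
    cluster_vecs: Dict[str, Sequence[float]],
) -> Dict[str, str]:
    """Map each page id to the name of its most similar cluster."""
    return {
        page_id: max(
            cluster_vecs,
            key=lambda name: cosine_similarity(vec, cluster_vecs[name]),
        )
        for page_id, vec in page_vecs.items()
    }


clusters = {"sorting algorithms": [1.0, 0.0], "cooking": [0.0, 1.0]}
pages = {"page-1": [0.9, 0.1], "page-2": [0.2, 0.8]}
assignments = assign_pages(pages, clusters)
```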

Two-stage semantic search

768-dimensional vector embeddings are stored via pgvector with HNSW indexes, on both clusters and individual history items. Search runs in two passes: find relevant clusters first, then fetch matching pages within them. Supports date range, domain, and keyword filters.
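The two passes can be sketched in memory as follows. This is only an illustration of the control flow: the real search runs against pgvector indexes, and the `Page` and `Cluster` types, the `two_stage_search` name, and its parameters are assumptions. Only the domain filter is shown.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class Cluster:  # hypothetical record
    id: int
    embedding: Sequence[float]


@dataclass
class Page:  # hypothetical record
    url: str
    domain: str
    cluster_id: int
    embedding: Sequence[float]


def _cos(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def two_stage_search(
    query_vec: Sequence[float],
    clusters: List[Cluster],
    pages: List[Page],
    top_clusters: int = 2,
    top_pages: int = 5,
    domain: Optional[str] = None,
) -> List[Page]:
    # Pass 1: keep only the clusters closest to the query.
    best = sorted(clusters, key=lambda c: _cos(query_vec, c.embedding), reverse=True)
    keep = {c.id for c in best[:top_clusters]}
    # Pass 2: rank pages inside those clusters, applying the optional filter.
    candidates = [
        p for p in pages
        if p.cluster_id in keep and (domain is None or p.domain == domain)
    ]
    candidates.sort(key=lambda p: _cos(query_vec, p.embedding), reverse=True)
    return candidates[:top_pages]
```

Narrowing to a few clusters first keeps the second pass small, which is the main appeal of the two-stage design.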

Agentic chat

A chat sidebar lets you talk to your history. The backend uses LangGraph to orchestrate an agentic loop with native tool calling, currently exposing semantic history search. Asking "what algorithms have I researched?" triggers a search across clusters, then synthesizes a structured answer with source links.
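The shape of such a loop can be sketched in plain Python. This sketch deliberately does not use LangGraph or a real LLM: `fake_model`, `search_history`, and the message format are hypothetical stand-ins, meant only to show how a tool-calling loop alternates between model replies and tool results.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def search_history(query: str) -> str:
    """Stand-in for the semantic history search tool."""
    return f"results for {query!r}"


TOOLS: Dict[str, Callable[[str], str]] = {"search_history": search_history}


def fake_model(messages: List[Message]) -> Message:
    """Stub LLM: request one tool call, then answer from the tool result."""
    last = messages[-1]
    if last["role"] == "user":
        return {"role": "assistant", "tool": "search_history", "content": last["content"]}
    return {"role": "assistant", "content": f"Answer based on: {last['content']}"}


def run_agent(question: str, model=fake_model, max_steps: int = 5) -> str:
    messages: List[Message] = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append(reply)
        if "tool" not in reply:  # no tool requested: this is the final answer
            return reply["content"]
        # Run the requested tool and feed its output back to the model.
        result = TOOLS[reply["tool"]](reply["content"])
        messages.append({"role": "tool", "content": result})
    return "stopped: step limit reached"
```

The step limit guards against a model that keeps requesting tools without ever producing an answer.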

Monitoring

Structured JSON logging, per-request tracing via contextvars, LLM token/cost tracking, and an in-memory /metrics endpoint.
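A minimal sketch of per-request tracing with contextvars and the standard logging module is shown below. The `request_id_var`, `JsonFormatter`, and `handle_request` names are hypothetical, and the actual log fields used by the backend may differ.

```python
import contextvars
import json
import logging
import uuid

# Holds the id of the request currently being handled ("-" outside a request).
request_id_var = contextvars.ContextVar("request_id", default="-")


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object that includes the request id."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "message": record.getMessage(),
            "request_id": request_id_var.get(),
        })


def handle_request(logger: logging.Logger) -> str:
    """Simulate one request: set the id, log, then restore the previous value."""
    request_id = uuid.uuid4().hex
    token = request_id_var.set(request_id)
    try:
        logger.info("clustering session")
    finally:
        request_id_var.reset(token)
    return request_id
```

Because the value lives in a context variable, every log line emitted while handling a request carries the same id without passing it through function arguments.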

Architecture

Current architecture (local backend stack + cloud LLM calls):

Chrome Extension (MV3)

├── Service Worker (history collection, session lifecycle)

└── Dashboard (sessions, search, chat)

│

▼ (localhost)

FastAPI Backend (modular)

├── assistant

├── session_intelligence

├── recall_engine

├── learning_content

├── identity

├── outbox

└── shared

│

▼

PostgreSQL + pgvector

├── Sessions, Clusters, History Items

└── HNSW vector indexes

│

▼ (HTTPS API calls)

Cloud-hosted LLM provider(s)

Tech stack

- Extension: Chrome Manifest V3, vanilla JS with a service-oriented architecture

- Frontend: React, TypeScript, Vite, Tailwind CSS, Zustand

- Backend: Python, FastAPI, SQLAlchemy, pgvector, LangGraph

- Database: PostgreSQL with pgvector (768-dim embeddings, HNSW indexes)

- LLM: Provider-agnostic (Google Gemini default, OpenAI, Anthropic, Ollama)

- Infra: Docker Compose

What's next

- Local-first LLM milestone: reduce dependence on cloud-hosted inference by supporting local model execution where possible, to better preserve sensitive history data.

- Single-user local mode: the current codebase still has multi-user management and Google OAuth from an earlier iteration. Since the app runs locally and handles sensitive data, this is being reworked into a simpler single-user setup with no authentication layer.

- Topic tracking: detect key topics across sessions, track time since last visit, visualize retention decay (forgetting curve), send smart reminders.

- Quiz & flashcards: auto-generate review cards from browsing content, triggered when a topic starts fading.
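To make the forgetting-curve idea concrete, here is a minimal sketch using the common exponential approximation of the Ebbinghaus curve, R = exp(-t / S), where t is the time since the last visit and S is a memory "stability" value. The function names, the stability parameter, and the 0.5 review threshold are assumptions for illustration; the planned feature may model retention differently.

```python
import math


def retention(days_since_visit: float, stability_days: float) -> float:
    """Estimated retention in [0, 1] under R = exp(-t / S)."""
    return math.exp(-days_since_visit / stability_days)


def days_until_review(stability_days: float, threshold: float = 0.5) -> float:
    """Days after which estimated retention falls to the threshold."""
    return -stability_days * math.log(threshold)


def is_due(days_since_visit: float, stability_days: float, threshold: float = 0.5) -> bool:
    """True when a topic has faded enough to prompt a review."""
    return retention(days_since_visit, stability_days) <= threshold
```

Under these assumptions, a topic with a stability of 4 days would come up for review a little under 3 days after the last visit, since 4 × ln 2 ≈ 2.77.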